What initially appears to be a legal dispute between an American AI company and a Chinese competitor may in fact signal a structural shift in how artificial intelligence is protected, valued, and deployed geopolitically. OpenAI has warned U.S. lawmakers that the Chinese AI firm DeepSeek may be using advanced techniques to functionally reconstruct Western frontier models through so-called model extraction. This is not an accusation of classic source code theft, but something more subtle — and potentially more consequential: the systematic reconstruction of intelligence through interaction.

The implications are significant. If advanced AI systems can be analyzed and replicated through large-scale API interaction, the core value of AI shifts away from tangible infrastructure — chips, datacenters, training clusters — toward something far harder to defend: behavioral logic, reasoning structure, and output patterns.

Why This Matters Now

The first phase of the AI race was largely decided at the infrastructure level. The leading players secured access to capital, compute, and elite research talent at a scale few could match. But if competitive advantage can now be narrowed through large-scale behavioral analysis of deployed models, then technological dominance becomes less about who can train the biggest system and more about who can protect it once it is deployed.

In that scenario, export controls, chip restrictions, and hardware bottlenecks lose part of their strategic leverage. What begins as a company-level dispute may ultimately redefine how nations think about digital sovereignty and intellectual property in the AI era.

What Model Extraction Means in Practice

Model extraction — sometimes referred to as query-based distillation — describes a process in which a party does not have direct access to a model’s weights or training data, but attempts to reconstruct its behavior through systematic interaction. Instead of copying code, an actor submits thousands or even millions of carefully structured prompts, records the outputs, and then uses those outputs as training data for a new model.

This is not a theoretical concern. As early as 2016, researchers from institutions including EPFL, Cornell, and UNC Chapel Hill demonstrated that commercial machine learning APIs, including services from Amazon and BigML, could be approximated with surprising accuracy simply by collecting enough input-output pairs. Later studies showed that even more complex classifiers could be reconstructed through adaptive querying strategies without internal access to the original model parameters.
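To make the idea concrete, here is a minimal Python sketch of extraction against a toy prediction service. It is an illustration under strong assumptions, not a description of any real attack: the service is a simple logistic-regression model that returns exact confidence scores, and the names `make_black_box` and `extract_logistic` as well as the secret parameters are hypothetical. Under those assumptions, one query at the origin reveals the bias and one query per input dimension reveals each weight.

```python
import math
import random


def make_black_box(weights, bias):
    """Return a query function that hides its parameters, like a prediction API."""
    def query(x):
        z = sum(w * xi for w, xi in zip(weights, x)) + bias
        return 1.0 / (1.0 + math.exp(-z))  # only a confidence score is exposed
    return query


def logit(p):
    """Inverse of the sigmoid: maps a probability back to the linear score."""
    return math.log(p / (1.0 - p))


def extract_logistic(query, dim):
    """Recover (weights, bias) of a logistic model using dim + 1 queries."""
    bias = logit(query([0.0] * dim))  # at the origin the score equals the bias
    weights = []
    for i in range(dim):
        unit = [0.0] * dim
        unit[i] = 1.0
        # At the i-th unit vector the score equals weights[i] + bias.
        weights.append(logit(query(unit)) - bias)
    return weights, bias


# Hypothetical "secret" model that the caller never sees directly.
rng = random.Random(0)
secret_w = [rng.uniform(-2, 2) for _ in range(5)]
secret_b = 0.3
api = make_black_box(secret_w, secret_b)

stolen_w, stolen_b = extract_logistic(api, dim=5)
max_error = max(
    abs(a - b) for a, b in zip(secret_w + [secret_b], stolen_w + [stolen_b])
)
print(f"largest parameter error: {max_error:.2e}")
```

Six queries are enough here only because the toy model is linear and the scores are unrounded. Frontier language models cannot be recovered this way; the sketch only shows why input-output access alone can leak what a model has learned.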

Within generative AI, a related concept is distillation: smaller models are trained on the outputs of larger models to create more lightweight versions. Many companies use this technique legitimately to optimize deployment on mobile or edge devices. The tension arises when an external competitor systematically queries a frontier model at industrial scale in order to build a commercially competitive alternative without incurring the original training costs.
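The mechanics of distillation can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any production system is trained: `teacher` is a hypothetical stand-in for a large model, the student is a plain logistic model, and the query distribution, learning rate, and epoch count are arbitrary choices. The student never sees the teacher's internals, only the probabilities it returns for a set of queries.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def teacher(x):
    """Hypothetical stand-in for a large model; returns a probability for x."""
    return sigmoid(1.5 * x[0] - 2.0 * x[1] + 0.5 + 0.3 * x[0] * x[1])


def distill(queries, soft_labels, dim, epochs=300, lr=1.0):
    """Fit a student logistic model to the teacher's soft labels (batch gradient descent)."""
    w, b = [0.0] * dim, 0.0
    n = len(queries)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, target in zip(queries, soft_labels):
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - target  # gradient of cross-entropy with respect to the score
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


rng = random.Random(0)

# Step 1: query the teacher and record its outputs.
queries = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(500)]
soft_labels = [teacher(x) for x in queries]

# Step 2: train the smaller student on those recorded outputs.
w, b = distill(queries, soft_labels, dim=2)


def student(x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Step 3: measure how often student and teacher agree on unseen inputs.
held_out = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(1000)]
agreement = sum(
    (teacher(x) > 0.5) == (student(x) > 0.5) for x in held_out
) / len(held_out)
print(f"student/teacher agreement on held-out inputs: {agreement:.1%}")
```

The same three steps apply whether the teacher is a company's own model or a competitor's API. What changes is who authorized the querying, not the technique.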

Imagine a model that required hundreds of millions of dollars in compute, exclusive GPU access, and years of research effort. If a competitor can reproduce comparable reasoning behavior through millions of structured prompts and build a lower-cost alternative, the economic value of the original system is indirectly extracted without any direct copying of source code. That is the core concern behind the current allegations.

For readers seeking a deeper technical grounding in how model architectures, training data, and neural networks work, our comprehensive guide to What Is Artificial Intelligence provides broader context on how these systems are built and why their internal structure matters.

From Chip Restrictions to Cognitive Protection

Until recently, AI competition centered on hardware. The United States restricted exports of advanced Nvidia H100 and H200 chips to China because those chips are essential for training frontier-scale models. China responded by accelerating domestic chip development and investing heavily in alternative infrastructure. That contest was visible, measurable, and physical.

Model extraction shifts the conflict to a more abstract layer. If hardware restrictions can be partially circumvented because a rival gains indirect access to a model’s behavioral intelligence through API interaction, then hardware control becomes only part of the equation. The strategic question evolves from who can train to who can observe, analyze, and reconstruct.

A useful analogy comes from the pharmaceutical industry. Through chemical analysis, competitors can sometimes approximate the composition of a drug without access to the original production process. Legally, this may fall within defined boundaries, but geopolitically it becomes sensitive when the technology in question is strategically critical. The same question now hangs over AI: should model behavior itself be protected in a manner comparable to patented compounds or copyrighted software?

Geopolitical Implications: AI as Strategic Infrastructure

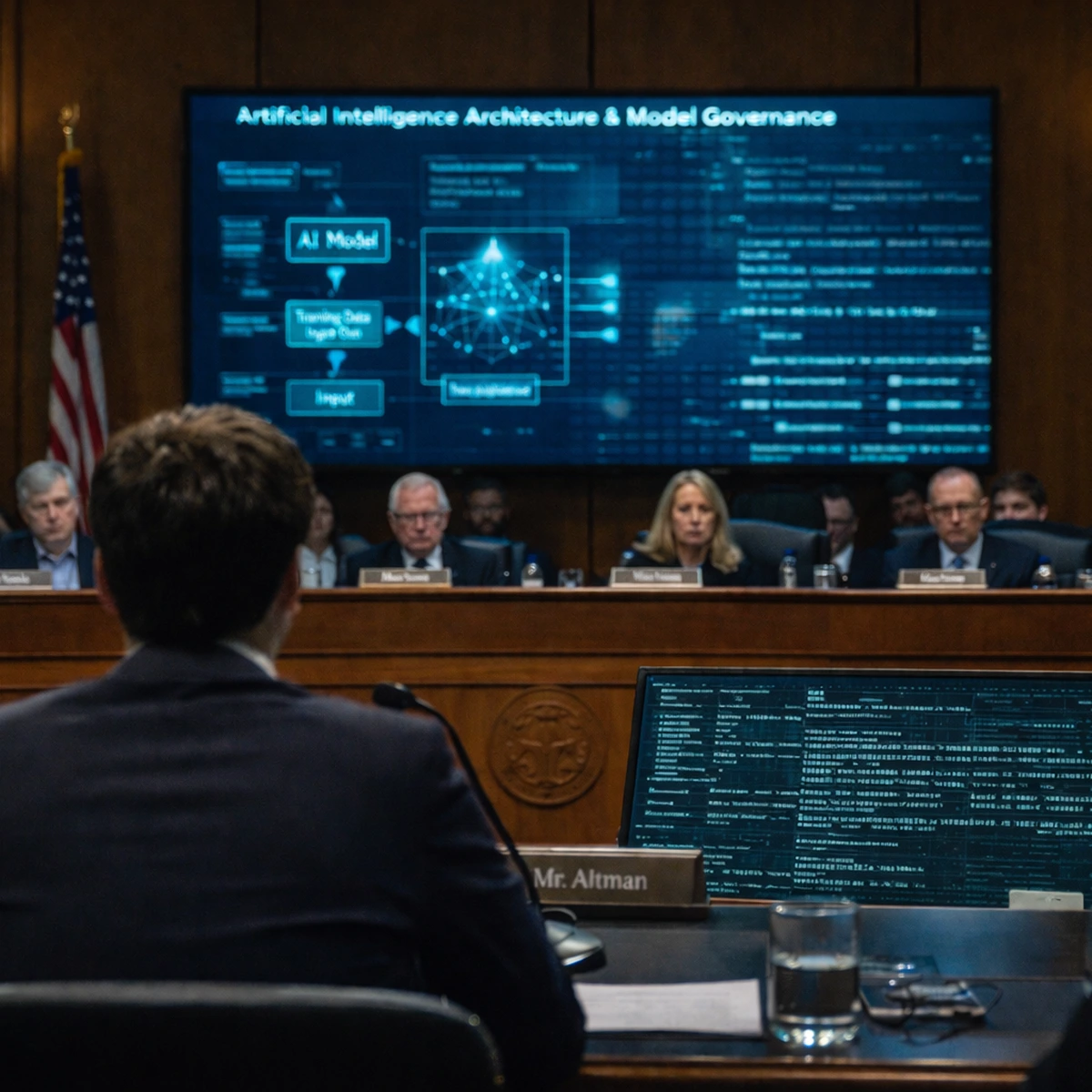

AI models are no longer mere commercial products; they function as productivity multipliers, dual-use defense technologies, and economic accelerators. When OpenAI raises concerns before U.S. lawmakers about possible extraction by a Chinese firm, it is acting not only to protect its corporate interests but also within a broader contest over technological dominance.

China has explicitly prioritized AI self-sufficiency within its long-term strategic planning, while the United States positions frontier AI as a cornerstone of economic and military advantage. If model extraction proves scalable and effective, technological gaps could narrow more rapidly than policymakers anticipated, even without equal investment in compute infrastructure. That would directly affect the balance of power between AI ecosystems.

Europe, meanwhile, finds itself in a complex intermediary position. European companies rely heavily on U.S.-based frontier models for commercial applications, yet operate within a regulatory environment that is often more stringent than that of either the U.S. or China. At the same time, Europe lacks a fully autonomous frontier-model ecosystem at comparable scale. If AI ecosystems fragment into geopolitical blocs, Europe will be forced to make strategic decisions about dependency, interoperability, and long-term investment capacity.

This shift toward cognitive asset protection aligns with broader structural changes we have analyzed in our State of AI report, where AI increasingly functions as national infrastructure rather than merely private-sector software.

What Changes for Companies and Investors?

If allegations of model extraction are taken seriously at legal and policy levels, companies will respond with technical and contractual countermeasures. We can expect advanced detection systems for anomalous query patterns, dynamic output variation designed to reduce replicability, and stricter API terms supported by forensic monitoring. These measures increase operational costs and favor firms with sufficient capital to implement robust defensive layers.
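As one illustration of what detecting anomalous query patterns could look like, the Python sketch below flags API keys that exceed a request budget inside a sliding time window. It is a deliberately simple assumption about one possible signal: the class name `QueryRateMonitor`, the thresholds, and the example keys are all hypothetical, and a real defense would likely combine volume with other signals such as prompt diversity or coverage of the input space.

```python
from collections import defaultdict, deque


class QueryRateMonitor:
    """Flags API keys that send more than max_queries within window_seconds."""

    def __init__(self, max_queries, window_seconds):
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # api_key -> recent request timestamps

    def record(self, api_key, timestamp):
        """Record one request; return True if the key is over its window budget."""
        window = self._history[api_key]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and window[0] <= timestamp - self.window_seconds:
            window.popleft()
        return len(window) > self.max_queries


monitor = QueryRateMonitor(max_queries=100, window_seconds=60)

# Hypothetical ordinary client: one request every five seconds.
normal_flags = [monitor.record("app-user", t * 5.0) for t in range(50)]

# Hypothetical bulk client: ten requests per second.
bulk_flags = [monitor.record("bulk-client", t * 0.1) for t in range(500)]

print("ordinary client flagged:", any(normal_flags))
print("bulk client flagged:", any(bulk_flags))
```

With these settings the ordinary client is never flagged, while the bulk client is flagged from its 101st request onward. A patient actor could stay under any fixed threshold by spreading queries across many keys, which is one reason such monitoring raises costs for providers without guaranteeing protection.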

For investors, valuation criteria may shift accordingly. Scale and model performance will remain critical, but defensive capability — the ability to protect cognitive assets — may become equally important. A new market segment focused on AI model security, behavioral watermarking, and authenticity verification could emerge, much as cybersecurity evolved into a major standalone industry once digital infrastructure became strategically vital.

At the same time, the gap between hyperscalers and smaller players may widen. Startups dependent on open API access could face tighter restrictions, higher costs, and more complex compliance requirements, potentially slowing innovation and accelerating consolidation.

The Fundamental Question: Can You Own Intelligence?

At its core, the dispute touches a foundational question of the AI era: can the behavior of a model itself be owned? Source code is protected by copyright, and training data can be contractually restricted. But model behavior — the way a system reasons and structures its answers — occupies a legal gray area.

If lawmakers determine that systematic model extraction constitutes intellectual property infringement, the AI market may consolidate around a limited number of heavily protected frontier providers. If, however, functional replication through interaction is deemed permissible so long as no direct code copying occurs, the competitive landscape changes dramatically: capabilities become cheaper to replicate, and leads built on expensive training runs become harder to sustain.

What is increasingly clear is that the AI power struggle is evolving from a battle over hardware and compute to a battle over cognitive property. In this new phase, dominance will depend not only on who can build the most capable model, but on who can prevent their intelligence from being reconstructed through digital interaction.

Sources & Further Context

This analysis is based on public statements and reporting regarding OpenAI’s communication with U.S. lawmakers concerning potential model extraction practices by Chinese AI firm DeepSeek. The article also references established academic research on model extraction and query-based distillation techniques, including early demonstrations of API reconstruction attacks conducted by researchers from institutions including EPFL, Cornell University, and UNC Chapel Hill.

Additional context on AI hardware export controls, Nvidia H100/H200 chip restrictions, and U.S.–China AI competition is drawn from publicly available policy briefings and semiconductor trade reporting.

For broader structural analysis of global AI power dynamics, see our related research in the State of AI 2025 report.